Can AI Accurately Translate Dog Barking and Body Language into Human Emotions?

For most of human-canine history, understanding what a dog is feeling has been a matter of intuition, experience, and often projection. Owners interpret a wagging tail as "happy" and a tucked tail as "scared"—but these surface-level readings miss enormous nuance. A wagging tail can signal anxiety, overstimulation, or aggression depending on speed, height, and stiffness.

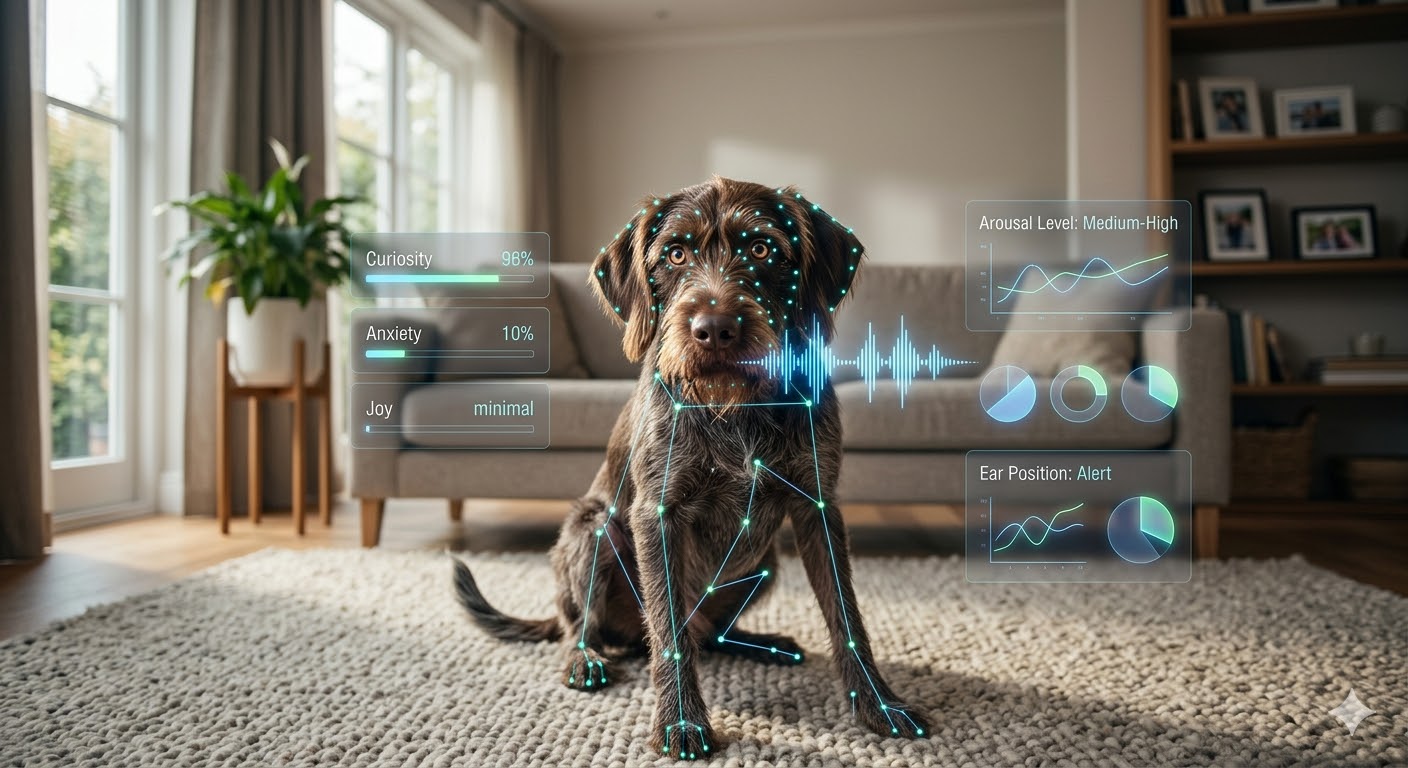

The 2026 landscape is shifting toward Pet Emotion & Behavior Intelligence (PEBI) models: AI systems that analyze multiple behavioral channels simultaneously to classify a dog's emotional state. Deep learning architectures trained on tens of thousands of labeled audio and video samples are reported to reach 94% accuracy in classifying dog vocalizations (barks, growls, whines, grunts) into distinct behavioral states.

How PEBI Models Process Canine Communication

| Input Channel | Data Points Analyzed | AI Accuracy |
|---|---|---|
| Vocalization (audio) | Pitch, frequency, duration, interval, harmonic structure | 94% |
| Body posture (video) | Spine curvature, weight distribution, muscle tension | 87% |
| Facial expression (video) | Ear position, eye aperture, lip tension, brow movement | 82% |
| Tail dynamics (video) | Height, speed, stiffness, lateral bias | 79% |
| Multi-modal (combined) | All channels fused via attention mechanism | 96% |

The key breakthrough is multi-modal fusion: when audio and visual channels are processed together through attention-based neural networks, classification accuracy reaches 96%, well above most untrained human observers, who average roughly 60–70% in controlled studies.
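
To make the fusion idea concrete, here is a minimal sketch of attention-style weighting across channels. It is an illustration under stated assumptions, not how any particular PEBI model works: the three-state label set, the `fuse` function, the sample probabilities, and the choice to score each channel by the negative entropy of its prediction (so more confident channels get more weight) are all hypothetical. A trained network would learn its attention scores rather than compute them this way.

```python
import math

# Illustrative subset of states; a real model would cover all 12.
STATES = ["relaxed", "anxious", "aggressive"]

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(probs):
    """Shannon entropy: low when a channel is confident, high when it is unsure."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def fuse(channel_probs):
    """Combine per-channel class probabilities with attention-style weights.

    Assumption: the attention score of a channel is its negative entropy,
    a stand-in for scores a trained network would learn.
    """
    names = list(channel_probs)
    weights = softmax([-entropy(channel_probs[n]) for n in names])
    fused = [
        sum(w * channel_probs[n][i] for w, n in zip(weights, names))
        for i in range(len(STATES))
    ]
    return dict(zip(names, weights)), fused

# Made-up per-channel outputs for a single moment in time.
channels = {
    "audio": [0.10, 0.80, 0.10],    # confident: sounds anxious
    "posture": [0.30, 0.40, 0.30],  # weak evidence
    "tail": [0.34, 0.33, 0.33],     # almost no information
}
weights, fused = fuse(channels)
label = STATES[fused.index(max(fused))]
print(weights, fused, label)
```

In this toy input the audio channel is the most confident, so it receives the largest weight and pulls the fused prediction toward "anxious".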

What Are the 12 Canine Emotional States AI Can Detect?

Early emotion-detection apps offered binary classifications: happy/sad, calm/stressed. Current PEBI models recognize a far richer emotional landscape, identifying 12 distinct states across the arousal-valence spectrum:

| # | Emotional State | Key Indicators | Owner Action |
|---|---|---|---|
| 1 | Joy / Excitement | Loose body, wide wag, play bow vocalizations | Engage: safe to interact and play |
| 2 | Contentment | Slow blink, relaxed posture, soft sighing | Maintain: don't interrupt the calm |
| 3 | Curiosity / Alertness | Forward ears, head tilt, focused gaze | Redirect if needed; allow exploration safely |
| 4 | Playfulness | Play bow, bouncy gait, short high-pitched barks | Reciprocate: excellent bonding window |
| 5 | Mild Anxiety | Lip licking, yawning, averted gaze, pacing | Intervene: remove stressor or provide safe space |
| 6 | Acute Stress | Panting, trembling, whale eye, flattened ears | Remove immediately from trigger |
| 7 | Fear | Tucked tail, cowering, freeze response, low growl | Create distance; do not force interaction |
| 8 | Frustration | Repetitive barking, jumping, demand behaviors | Redirect to appropriate outlet |
| 9 | Aggression | Hard stare, stiff body, raised hackles, deep growl | Disengage: increase distance immediately |
| 10 | Boredom | Destructive chewing, excessive licking, restlessness | Enrich: puzzle toys, training, exercise |
| 11 | Affection-Seeking | Leaning, pawing, nuzzling, soft eye contact | Respond: reinforce the bond |
| 12 | Pain / Discomfort | Guarding, whimpering, reluctance to move, altered gait | Veterinary assessment recommended |

The clinical value here is significant: states 5–9 and 12 represent early-warning signals that, if caught in time, can prevent behavioral escalation—aggression incidents, panic episodes, or undetected medical conditions progressing silently.
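
One way an app might encode the table and the early-warning subset is a simple lookup. This is a sketch only: the `STATES` dictionary, the `EARLY_WARNING` set, and the `triage` helper are hypothetical names, with labels and actions abbreviated from the table above.

```python
# Hypothetical lookup built from the table: state id -> (label, short owner action).
STATES = {
    1: ("Joy / Excitement", "Engage"),
    2: ("Contentment", "Maintain"),
    3: ("Curiosity / Alertness", "Redirect if needed"),
    4: ("Playfulness", "Reciprocate"),
    5: ("Mild Anxiety", "Intervene"),
    6: ("Acute Stress", "Remove immediately from trigger"),
    7: ("Fear", "Create distance"),
    8: ("Frustration", "Redirect to appropriate outlet"),
    9: ("Aggression", "Disengage"),
    10: ("Boredom", "Enrich"),
    11: ("Affection-Seeking", "Respond"),
    12: ("Pain / Discomfort", "Veterinary assessment recommended"),
}

# States 5-9 and 12 are the early-warning signals named in the text.
EARLY_WARNING = {5, 6, 7, 8, 9, 12}

def triage(state_id):
    """Return the label, suggested action, and early-warning flag for a state."""
    label, action = STATES[state_id]
    return {
        "state": label,
        "action": action,
        "early_warning": state_id in EARLY_WARNING,
    }

print(triage(7))
print(triage(2))
```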

How Do "Emotional Prosthetics" Help Owners Intervene in Real Time?

The term "emotional prosthetic" captures the core value proposition of PEBI apps: they give owners a sensory capability they don't naturally possess—the ability to read canine emotional states with the accuracy of a trained behaviorist.

In practice, these apps function as a real-time translation layer:

- Audio Monitoring: The app continuously listens through the phone's microphone (or a paired smart collar). When a bark, whine, or growl is detected, it classifies the vocalization and pushes a notification: "Alert: Your dog's barking pattern indicates frustration—consider redirecting to a puzzle toy."

- Video Analysis: Using the phone camera or a pet camera, the system analyzes body posture in real time. A dog showing escalating signs of stress—lip licking → pacing → whale eye—triggers graduated alerts before the behavior reaches a crisis point.

- Pattern Recognition: Over time, the AI learns your specific dog's behavioral signatures. A bark that means "someone's at the door" is differentiated from a bark that means "I'm in pain"—even when they sound identical to the human ear.

- Contextual Recommendations: Beyond classification, the best PEBI apps provide actionable guidance tied to each emotional state. Not just "your dog is anxious" but "your dog is showing mild anxiety consistent with pre-storm patterns—consider activating your Sensory Support Layer protocol."
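
The graduated alerts described under Video Analysis can be sketched as a small state machine that tracks how far a dog has moved along a known escalation sequence. The sequence comes from the example above; the alert level names and the `alert_level` function are hypothetical, and a real system would presumably also consider timing and confidence.

```python
# Escalation sequence taken from the example in the text.
ESCALATION = ["lip licking", "pacing", "whale eye"]

# Hypothetical alert names, one per stage reached (index 0 = nothing detected).
ALERT_LEVELS = ["none", "notice", "warning", "urgent"]

def alert_level(observed_signals):
    """Return an alert level based on in-order progress through ESCALATION.

    Signals outside the sequence are ignored; a later-stage signal only
    counts once every earlier stage has been seen.
    """
    stage = 0
    for signal in observed_signals:
        if stage < len(ESCALATION) and signal == ESCALATION[stage]:
            stage += 1
    return ALERT_LEVELS[stage]

print(alert_level(["lip licking", "yawning", "pacing"]))
print(alert_level(["lip licking", "pacing", "whale eye"]))
```

Requiring the signals in order keeps a lone late-stage signal from raising an alert in this sketch; whether that is desirable is a design choice, and a production system might instead treat whale eye on its own as worth flagging.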

How Accurate Are These AI Models Compared to Human Experts?

One of the most striking findings in recent PEBI research is how AI compares to human observers across different expertise levels:

| Observer Type | Vocalization Accuracy | Body Language Accuracy | Combined |
|---|---|---|---|
| Untrained pet owner | 40–55% | 55–65% | 60–70% |
| Experienced dog trainer | 70–80% | 80–90% | 85–92% |
| Board-certified behaviorist | 85–92% | 90–95% | 93–97% |
| PEBI AI model (2026) | 94% | 87% | 96% |

The AI's combined accuracy of 96% places it on par with board-certified veterinary behaviorists (93–97% in the table above), professionals who complete 3+ years of post-doctoral residency training. For the average pet owner operating at 60–70% accuracy, a PEBI app represents a gain of 26 to 36 percentage points, or roughly 37–60% in relative terms, in their ability to read their own dog.
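
The size of the improvement depends on whether it is measured in percentage points or in relative terms. A quick check using the combined figures from the table (the `improvement` helper is just an illustrative name):

```python
def improvement(baseline_pct, model_pct):
    """Return (percentage-point gain, relative gain in percent)."""
    points = model_pct - baseline_pct
    relative = 100 * points / baseline_pct
    return points, relative

MODEL = 96  # combined PEBI accuracy from the table

for owner in (60, 70):  # low and high end of the untrained-owner range
    points, relative = improvement(owner, MODEL)
    print(f"owner at {owner}%: +{points} points, {relative:.1f}% relative gain")
```

An owner at 60% gains 36 points (a 60% relative gain), while an owner at 70% gains 26 points (about 37% relative).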

What Are the Limitations and Ethical Considerations?

Despite the impressive accuracy numbers, PEBI technology comes with important caveats:

- Breed Variation: Brachycephalic breeds (pugs, bulldogs) have fundamentally different facial structures and vocalization patterns than dolichocephalic breeds (collies, greyhounds). Models trained primarily on one morphotype may misclassify others. The best systems include breed-specific calibration.

- Individual Baseline: A Siberian Husky that "talks" constantly has a very different vocalization baseline than a quiet Basenji. Without a calibration period (typically 1–2 weeks), the AI may over-flag normal behavior as distress.

- Anthropomorphism Risk: Translating canine emotions into human labels carries inherent risk. A dog classified as "frustrated" may be experiencing something that doesn't neatly map to the human concept of frustration. The labels are approximations, not equivalences.

- Over-Reliance: There is a genuine concern that owners may defer entirely to the app rather than developing their own observational skills. The technology should augment—not replace—the human-animal bond and the owner's evolving understanding of their specific dog.

- Privacy and Data: Continuous audio and video monitoring raises privacy questions—not just for the pet, but for the household. Review data handling policies carefully, particularly regarding whether audio is processed locally or uploaded to cloud servers.
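
The Individual Baseline point can be illustrated with a per-dog comparison. This sketch assumes the app logs a daily vocalization count during calibration and flags a day only when it deviates strongly from that dog's own history. The `is_unusual` function, the z-score threshold of 2.0, and all the numbers are made up for illustration.

```python
from statistics import mean, stdev

def is_unusual(calibration_counts, today_count, z_threshold=2.0):
    """Flag today's count if it is far from this dog's own calibration baseline."""
    mu = mean(calibration_counts)
    sigma = stdev(calibration_counts)
    if sigma == 0:
        # No variation during calibration: any change at all stands out.
        return today_count != mu
    return abs(today_count - mu) / sigma > z_threshold

# Hypothetical one-week calibration logs (vocalizations per day).
husky = [120, 135, 110, 140, 125, 130, 115]   # a talkative dog
basenji = [3, 5, 2, 4, 3, 6, 4]               # a quiet dog

print(is_unusual(husky, 130))    # within the Husky's normal range
print(is_unusual(basenji, 40))   # far above the Basenji's normal range
```

The same raw count can be routine for one dog and alarming for another, which is why a fixed global threshold would over-flag the talkative dog.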

The Bottom Line

The era of guessing what your dog is feeling is ending. PEBI models may represent one of the most significant advances in human-canine communication since wolves were domesticated at least 15,000 years ago. With reported 94% vocalization accuracy and 96% multi-modal accuracy, these "emotional prosthetics" aim to give every owner access to behaviorist-level insight. The dogs haven't changed, but our ability to understand them finally has.