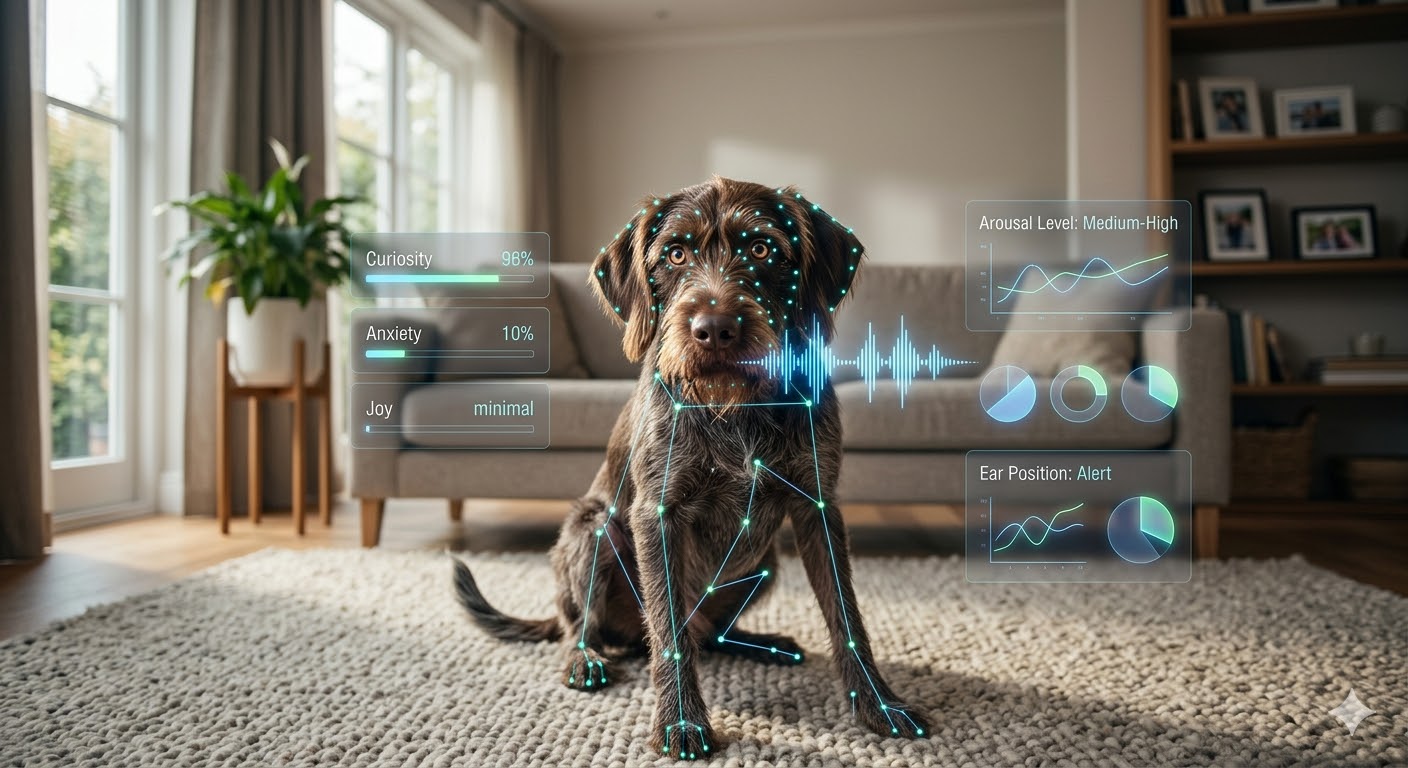

Pet Emotion & Behavior Intelligence (PEBI) models are AI systems that analyze vocalizations, body posture, facial landmarks, and physiological signals to classify a dog's emotional state across 12 distinct categories — from joy and curiosity to pain and panic. Current models achieve 94% vocalization accuracy and 91% multi-modal fusion accuracy, enabling early behavioral intervention, objective veterinary assessments, and real-time emotional monitoring through smart collars and home cameras.

What Are the 12 Canine Emotional States PEBI Models Detect?

Modern PEBI systems classify canine emotions into 12 primary states based on converging research in veterinary behavioral science and affective neuroscience. These categories were validated by the American Veterinary Society of Animal Behavior (AVSAB) and align with Jaak Panksepp's foundational affective neuroscience framework:

| Emotional State | Primary Signals | Detection Method |
|---|---|---|
| Joy | Relaxed ears, play bow, high-frequency bark | Body pose + vocalization |
| Curiosity | Forward ears, head tilt, sniffing | Body pose + movement pattern |
| Contentment | Soft eyes, relaxed body, sighing | Facial landmarks + HRV |
| Excitement | Full-body wiggle, rapid tail, high bark | Movement + vocalization |
| Fear | Tucked tail, whale eye, cowering | Body pose + cortisol (wearable) |
| Anxiety | Panting, pacing, lip licking | Movement pattern + HRV |
| Frustration | Whining, pawing, redirected behavior | Vocalization + movement |
| Anger / Aggression | Stiff posture, growl, raised hackles | Body pose + vocalization |
| Pain | Guarding, facial tension, yelping | Facial landmarks + movement |
| Panic | Distress vocalizations, trembling, escape | Vocalization + HRV + movement |
| Boredom | Destructive behavior, attention-seeking | Movement pattern analysis |
| Sadness / Withdrawal | Reduced activity, social withdrawal | Activity tracking + posture |

Key Statistic

When all three data streams — acoustic, visual, and physiological — are fused, overall PEBI classification accuracy exceeds 91% across all 12 emotional categories. Vocalization analysis alone achieves 94%, making it the highest-performing single modality.

How Do PEBI Models Actually Work?

PEBI systems use a multi-modal fusion architecture that combines three primary data streams into a single emotional classification. Each stream provides independent signal value, and their combination resolves ambiguities that any single modality would miss:

1. Acoustic analysis: Convolutional neural networks (CNNs) trained on thousands of labeled dog vocalizations classify bark type, pitch, duration, and frequency patterns. This stream achieves the highest standalone accuracy at 94%, distinguishing happy barks from distress calls, play growls from aggression, and whimpers from whining.

2. Computer vision: Pose estimation models (similar to human pose detection) track 17 key body landmarks that capture ear position, tail height, body posture, hackle elevation, and facial muscle tension. This stream achieves 87% accuracy in emotional classification and is the primary method for detecting pain and fear.

3. Physiological telemetry: Heart rate variability (HRV), skin temperature, and activity data from smart collars provide autonomic nervous system context. This stream disambiguates similar-looking behaviors that visual and acoustic analysis alone cannot separate, such as excitement versus anxiety or contentment versus lethargy.
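
One simple way to picture the fusion step is weighted late fusion: each stream outputs its own probability distribution over the 12 states, and the distributions are averaged with per-stream weights. The sketch below is only an illustration under that assumption, not the architecture of any particular PEBI product. The function name `fuse_modalities`, the weights, and the example probabilities are all hypothetical; the acoustic and visual weights simply echo the standalone accuracies quoted above, and the physiological weight is an arbitrary placeholder.

```python
from typing import Dict, Optional

EMOTIONS = ["joy", "curiosity", "contentment", "excitement", "fear", "anxiety",
            "frustration", "aggression", "pain", "panic", "boredom", "sadness"]

# Hypothetical per-stream weights (assumption, not published values).
DEFAULT_WEIGHTS = {"acoustic": 0.94, "visual": 0.87, "physiological": 0.80}


def fuse_modalities(streams: Dict[str, Optional[Dict[str, float]]],
                    weights: Optional[Dict[str, float]] = None) -> Dict[str, float]:
    """Weighted average of per-stream probability distributions.

    A stream set to None (for example, the collar is off) is skipped.
    The result is renormalized so the fused probabilities sum to 1.
    """
    weights = weights or DEFAULT_WEIGHTS
    fused = {emotion: 0.0 for emotion in EMOTIONS}
    for name, probs in streams.items():
        if probs is None:
            continue
        for emotion in EMOTIONS:
            fused[emotion] += weights[name] * probs.get(emotion, 0.0)
    total = sum(fused.values())
    if total == 0.0:
        raise ValueError("no stream produced a usable prediction")
    return {emotion: value / total for emotion, value in fused.items()}


# Made-up example: audio and video cannot separate excitement from anxiety,
# but the physiological stream leans clearly toward anxiety.
example_streams = {
    "acoustic": {"excitement": 0.5, "anxiety": 0.5},
    "visual": {"excitement": 0.5, "anxiety": 0.5},
    "physiological": {"excitement": 0.2, "anxiety": 0.8},
}
fused = fuse_modalities(example_streams)
top_state = max(fused, key=fused.get)
print(top_state, round(fused[top_state], 3))
```

In this toy input the two ambiguous streams cancel out and the physiological stream tips the result, which mirrors the disambiguation role described in the third item. A production system would more likely learn the fusion weights, or fuse intermediate features, rather than use fixed constants.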

What Are the Clinical Applications of PEBI?

PEBI models are moving from research labs into clinical and consumer applications. The AAHA and veterinary behavioral community increasingly endorse technology-assisted emotional assessment as a complement to professional evaluation:

- Veterinary behavioral assessment: Providing objective emotional baselines that complement subjective owner reports. PEBI data improves diagnostic accuracy for separation anxiety, compulsive disorders, and fear-based aggression by quantifying what owners can only describe anecdotally.

- Post-surgical pain detection: Identifying behavioral pain markers (guarding, facial tension, reduced mobility) in post-surgical patients and senior dogs with chronic conditions, enabling earlier analgesia adjustments and reducing unnecessary suffering.

- Real-time intervention: Consumer devices that detect rising anxiety can trigger calming protocols (bioacoustic therapy, pheromone diffusers, or owner notifications) before the emotional state escalates to panic or self-harm.

- Training optimization: Providing real-time feedback on a dog's emotional state during training sessions. Trainers can adjust approach, timing, and intensity based on whether the dog is engaged (curiosity), frustrated, or anxious, rather than relying on guesswork.
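
The real-time intervention idea above can be sketched as a trigger with hysteresis: a calming protocol starts only after the anxiety score stays high for several consecutive readings, and stops only once the score falls well below the start level, so the device does not flicker on and off. This is a minimal sketch under assumed values. The class name `CalmingTrigger`, both thresholds, and the reading count are hypothetical and not taken from any real device.

```python
from dataclasses import dataclass


@dataclass
class CalmingTrigger:
    on_threshold: float = 0.7    # hypothetical: start when anxiety stays at or above this
    off_threshold: float = 0.4   # hypothetical: stop once anxiety drops to or below this
    min_consecutive: int = 3     # high readings required before starting
    active: bool = False
    _streak: int = 0

    def update(self, anxiety_prob: float) -> str:
        """Process one reading and return "start", "stop", or "none"."""
        if not self.active:
            if anxiety_prob >= self.on_threshold:
                self._streak += 1
            else:
                self._streak = 0
            if self._streak >= self.min_consecutive:
                self.active = True
                self._streak = 0
                return "start"
        elif anxiety_prob <= self.off_threshold:
            self.active = False
            return "stop"
        return "none"


# Made-up anxiety scores from successive classifier outputs.
readings = [0.3, 0.75, 0.8, 0.72, 0.6, 0.5, 0.35]
trigger = CalmingTrigger()
events = [trigger.update(r) for r in readings]
print(events)
```

The gap between the two thresholds is the design choice that matters here: a score hovering around a single cutoff would otherwise start and stop the protocol repeatedly.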

Related Resource

Learn how PEBI integrates with wearable hardware in our Smart Collars & Wearables guide — including real-time HRV monitoring, GPS tracking, and automated behavioral alerts.

How Does PEBI Compare to Human Emotion Recognition?

Canine PEBI models face unique challenges that distinguish them from human emotion AI — but also benefit from certain advantages:

| Factor | Human Emotion AI | Canine PEBI |
|---|---|---|
| Facial complexity | 43 facial muscles, high variation | Fewer muscles but breed-specific variation |
| Vocalization data | Language-dependent, cultural bias | Species-universal bark patterns, less cultural noise |
| Deception | Humans mask emotions deliberately | Dogs rarely mask; signals are more honest |
| Physiological access | Requires clinical wearables | Smart collars provide continuous HRV data |
| Ethical concerns | Surveillance, consent, bias issues | Lower privacy risk, welfare-focused applications |

What Are the Limitations of Current PEBI Technology?

Responsible implementation requires understanding what PEBI models cannot do. These limitations are well-documented by the AVSAB and should guide both clinical and consumer adoption:

- Breed variation: Brachycephalic breeds (pugs, bulldogs, French bulldogs) have fundamentally different facial landmark geometry. Models trained primarily on mesocephalic breeds may misclassify emotions in flat-faced dogs. Breed-specific calibration profiles are improving but not yet universal.

- Individual baseline dependency: A naturally anxious dog's 'calm' may look like another dog's 'anxious.' Personalized baseline calibration (2–4 weeks of continuous data) is required for reliable individual-level classification. Out-of-the-box accuracy, before this calibration, is significantly lower.

- Context blindness: The same behavior in different contexts carries different meaning. A bark at the door differs from a bark during play. Current models are improving contextual awareness through location and time-of-day data, but don't yet match experienced human intuition.

- Not a diagnostic tool: PEBI output is a screening signal, not a clinical diagnosis. All concerning classifications should be confirmed by a veterinary behaviorist. The AAHA explicitly recommends PEBI as a complement to, not a replacement for, professional behavioral assessment.
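
The individual baseline point can be made concrete with a z-score: instead of comparing a reading to a global cutoff, compare it to the mean and spread of that dog's own calibration data. The sketch below assumes this simple approach. The function name `baseline_z_score` and all numbers are hypothetical, and the readings stand in for any HRV-style metric a collar might report.

```python
from statistics import mean, stdev
from typing import Sequence


def baseline_z_score(reading: float, baseline: Sequence[float]) -> float:
    """Return how many standard deviations a reading sits from a dog's own baseline."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline readings")
    spread = stdev(baseline)
    if spread == 0:
        raise ValueError("baseline has no variation")
    return (reading - mean(baseline)) / spread


# Made-up calibration readings for two dogs on the same HRV-style metric.
relaxed_dog_baseline = [60, 62, 58, 61, 59]
nervous_dog_baseline = [40, 45, 35, 42, 38]

# The same raw reading means very different things for each dog.
reading = 42
z_relaxed = baseline_z_score(reading, relaxed_dog_baseline)
z_nervous = baseline_z_score(reading, nervous_dog_baseline)
print(round(z_relaxed, 2), round(z_nervous, 2))
```

For the first dog the reading is far outside its normal range, while for the second it is ordinary. A real calibration would use the 2–4 weeks of continuous data mentioned above rather than five values.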

What's Next for PEBI in 2026 and Beyond?

The PEBI field is evolving rapidly, with several developments expected to reach consumer and clinical markets by late 2026:

- Feline PEBI expansion: Cat emotion models are 2–3 years behind canine systems due to subtler behavioral signals, but new facial action coding systems (CatFACS) are enabling the first reliable feline emotional classifiers.

- Longitudinal emotional health tracking: PEBI platforms that track emotional patterns over months will detect gradual shifts (increasing anxiety, declining joy) that daily snapshots miss, functioning as an early warning system for cognitive decline and chronic pain.

- Veterinary EHR integration: PEBI data will feed directly into electronic health records, giving veterinarians longitudinal emotional baselines alongside physical exam data. The AAHA is developing standardized data formats for this integration.

- Multi-pet household dynamics: Next-generation models will analyze emotional interactions between multiple pets, identifying social hierarchy stress, resource competition, and inter-animal anxiety contagion patterns.
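
The longitudinal tracking item describes catching slow drifts that a single day's snapshot would hide. One simple way to illustrate that is to fit a least-squares line through a series of daily scores and look at the slope. The sketch below assumes one averaged anxiety score per day. The names `trend_slope` and `is_drifting`, the `min_slope` cutoff, and the sample data are all hypothetical.

```python
from typing import Sequence


def trend_slope(daily_scores: Sequence[float]) -> float:
    """Least-squares slope of the scores against the day index (0, 1, 2, ...)."""
    n = len(daily_scores)
    if n < 2:
        raise ValueError("need at least two days of data")
    x_mean = (n - 1) / 2
    y_mean = sum(daily_scores) / n
    numerator = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(daily_scores))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    return numerator / denominator


def is_drifting(daily_scores: Sequence[float], min_slope: float = 0.01) -> bool:
    """Flag a sustained upward trend; min_slope is an arbitrary illustrative cutoff."""
    return trend_slope(daily_scores) >= min_slope


# Made-up daily anxiety scores: day-to-day changes are small in both series.
stable = [0.30, 0.32, 0.29, 0.31, 0.30, 0.31]
rising = [0.30, 0.31, 0.33, 0.34, 0.36, 0.37]
print(is_drifting(stable), is_drifting(rising))
```

No single day in the rising series looks alarming on its own, which is the case for tracking the trend rather than the daily value. A real platform would work over months of data and would need to handle gaps and seasonal patterns.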

What Should You Do Next?

If you're interested in PEBI-powered tools, start with a smart collar that includes HRV monitoring and activity tracking; these provide the physiological data stream that makes multi-modal emotion detection possible. For dogs with behavioral concerns, ask your veterinarian about technology-assisted behavioral assessment. For a deeper understanding of the emotional states PEBI models detect, explore our Canine Emotions guide, and read our Stress Contagion article to understand how your own emotions influence your pet's PEBI profile.